Advanced robotics today is structured into at least six fields of research: situated robotics (which relates the robot to its environment), behavior-based robotics, cognitive robotics, developmental or epigenetic robotics, evolutionary robotics, and biorobotics. It is a broad field of interdisciplinary study that draws on engineering (mechanical, electrical, electronics and computing) and on science (physics, anatomy, psychology, biology, zoology and ethology, among others). The basis of this research is embodied Cognitive Science and the New AI. Its purpose is to bring into being intelligent, autonomous robots that reason, behave, evolve and act like people. By Sergio Moriello.

The term refers to highly complex automated systems that have an articulated mechanical structure, governed by an electronic control system, and characteristics of autonomy, reliability, versatility and mobility.

In essence, “autonomous intelligent robots” are dynamic systems consisting of an electronic controller coupled to a mechanical body. These machines therefore require adequate sensory systems (to perceive the environment in which they operate), a precise and adaptable mechanical structure (to give them some physical skill in locomotion and manipulation), complex effector systems (to carry out the assigned tasks) and sophisticated control systems (to take corrective action when necessary) [Moriello, 2005, p. 172].
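As a rough illustration of what "corrective action" can mean in such a control system, the sketch below uses a proportional feedback rule: at each step the controller measures the error between a target and the sensed value and applies a correction proportional to it. Proportional control is chosen here only as a simple example; the source does not name a control law, and the function names and gain are hypothetical.

```python
def p_control_step(target: float, measured: float, gain: float) -> float:
    """Return a correction proportional to the current error."""
    return gain * (target - measured)


def simulate(target: float, start: float, gain: float, steps: int) -> list[float]:
    """Apply the correction repeatedly to an idealized system.

    Assumption: the 'body' responds instantly and exactly to each
    correction, which no real mechanism does.
    """
    value = start
    history = [value]
    for _ in range(steps):
        value += p_control_step(target, value, gain)
        history.append(value)
    return history


# Each step halves the remaining error when gain is 0.5.
trace = simulate(target=1.0, start=0.0, gain=0.5, steps=3)
# trace == [0.0, 0.5, 0.75, 0.875]
```

A real robot would replace the idealized update with sensor readings and actuator commands, and would usually need a more elaborate controller.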

Situated Robotics

This approach deals with robots that are embedded in complex and often dynamically changing environments [Mataric, 2002]. It is based on two central ideas [Florian, 2003] [Muñoz Moreno, 2000] [Innocenti Badano, 2000]: a) robots are “embodied” (embodiment), i.e., they have a physical body suited to experiencing their environment directly, so that their actions have immediate feedback on their own perceptions; and b) robots are “situated” (situatedness), i.e., they are embedded within an environment and interact with the world, which directly influences their behavior.

Obviously, the complexity of the environment is closely related to the complexity of the control system. If the robot has to react quickly and intelligently in a dynamic and challenging environment, the control problem becomes very difficult. If, however, the robot does not need to respond quickly, the complexity required of the control system is reduced.

Within this paradigm there are several sub-paradigms: “behavior-based robotics”, “cognitive robotics”, “epigenetic robotics”, “evolutionary robotics” and “biomimetic robotics”.

Behavior-Based Robotics

This approach uses behaviorist principles: robots generate a behavior only when stimulated, i.e., they respond to changes in their local environment (as when someone accidentally touches a hot object). Here, the designer divides the task into many different basic behaviors, each of which runs on a separate layer of the robot’s control system.

Typically, these modules (behaviors) might be obstacle avoidance, walking, lifting, etc. The intelligent functions of the system, such as perception, planning, modeling and learning, emerge from the interaction between the various modules and the physical environment in which the robot is immersed. The control system, which is fully distributed, is built incrementally, layer by layer, through a process of trial and error, and each layer is responsible for only one basic behavior [Moriello, 2005, pp. 177-178].

Behavior-based systems are capable of reacting in real time, since they compute actions directly from perceptions (through a set of “situation-action” correspondence rules). It is important to note that the number of layers increases with the complexity of the problem. Thus, a very complex task may be beyond the ability of the designer, because it is hard to define all the layers, their interrelationships and their dependencies [Pratiharas, 2003].
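The layered “situation-action” idea can be sketched as a list of behaviors, each a rule that maps the current percept directly to an action, checked in priority order. This is only a minimal sketch: fixed-priority arbitration is one simple way to combine layers and is an assumption here, as are the behavior names, the percept keys and the 20 cm threshold.

```python
from dataclasses import dataclass
from typing import Callable, Optional

# Hypothetical sensor snapshot, e.g. {"bump": False, "obstacle_cm": 120}
Percept = dict


@dataclass
class Behavior:
    name: str
    # Returns an action when the situation triggers this behavior, else None.
    rule: Callable[[Percept], Optional[str]]


def escape(p: Percept) -> Optional[str]:
    return "back_up" if p.get("bump") else None


def avoid(p: Percept) -> Optional[str]:
    return "turn_left" if p.get("obstacle_cm", 999) < 20 else None


def wander(p: Percept) -> Optional[str]:
    return "forward"  # default behavior, always applicable


# One behavior per layer, highest priority first.
LAYERS = [Behavior("escape", escape), Behavior("avoid", avoid), Behavior("wander", wander)]


def select_action(percept: Percept, layers=LAYERS) -> tuple[str, str]:
    """Return (behavior name, action) for the first layer whose rule fires."""
    for behavior in layers:
        action = behavior.rule(percept)
        if action is not None:
            return behavior.name, action
    return "idle", "stop"
```

For example, `select_action({"bump": False, "obstacle_cm": 10})` selects the `avoid` layer. No planning or world model is involved, which is why such a system can react quickly, and adding a new capability means adding and tuning another layer by hand.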

Another drawback is that, because several individual behaviors are present and interact dynamically with the world, it is often difficult to say that a particular series of actions was the product of a particular behavior. Sometimes several behaviors are active simultaneously or alternate rapidly.

Although systems built with this approach may reach insect-level intelligence, they will probably have limited skills, since they lack internal representations [Dawson, 2002]. Indeed, robots of this type have great difficulty executing complex tasks, and even for the simplest tasks there is no guarantee that the solution found is optimal.

Cognitive Robotics

This approach uses techniques from the field of Cognitive Science. It deals with deploying robots that perceive, reason and act in dynamic, unknown and unpredictable environments. Such robots must have cognitive functions that involve high-level reasoning, for example about goals, actions, time, the cognitive states of other robots, when and what to perceive, how to learn from experience, and so on.

To do so, they must possess an internal symbolic model of their local environment, and sufficient capacity for logical reasoning to make decisions and perform the tasks necessary to achieve their objectives. In short, this line of work is concerned with implementing cognitive characteristics in robots, such as perception, concept formation, attention, learning, short- and long-term memory, etc. [Bogner, Maletic, Franklin, 2000].

If robots can be made to develop their cognitive abilities themselves, there is no need to program them “by hand” for every conceivable task or contingency [Kovacs, 2004]. Likewise, if robots can be made to use representations and reasoning mechanisms similar to those of humans, human-computer interaction and collaborative work could be improved. However, this requires high processing power (especially if the robot has many sensors and actuators) and a lot of memory (to represent the state space).
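To see why memory becomes a concern, consider a back-of-the-envelope calculation: if every sensor reading is discretized into a few levels, the number of distinguishable states is the product of the levels of all variables, so it grows exponentially with the number of sensors. The robot configuration, the number of actions and the four-byte table entry below are hypothetical figures chosen only to show the arithmetic.

```python
from math import prod


def state_space_size(levels_per_variable: list[int]) -> int:
    """Number of distinct states when each variable takes the given number of levels."""
    return prod(levels_per_variable)


def table_bytes(n_states: int, n_actions: int, bytes_per_entry: int = 4) -> int:
    """Memory for a full state-by-action lookup table (assumed layout)."""
    return n_states * n_actions * bytes_per_entry


# Hypothetical robot: 8 range sensors with 4 levels each, plus 2 on/off bump switches.
levels = [4] * 8 + [2] * 2
n_states = state_space_size(levels)        # 4**8 * 2**2 = 262144
memory = table_bytes(n_states, n_actions=5)  # 262144 * 5 * 4 = 5242880 bytes
```

Adding a single extra four-level sensor multiplies both figures by four, which is the sense in which many sensors and actuators drive up the memory needed.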

Developmental and Epigenetic Robotics

This approach is characterized by its attempt to implement general-purpose control systems through a long process of autonomous development or self-organization. As a result of interaction with its environment, the robot is able to develop different, and increasingly complex, perceptual, cognitive and behavioral skills.

This research area integrates developmental neuroscience, developmental psychology and situated robotics. Initially the system can be equipped with a small set of innate behaviors or knowledge but, thanks to experience, it is able to create more complex representations and actions. In short, the aim is for the machine to develop independently the skills appropriate to a particular environment, passing through the different stages of its “autonomous mental development”.

The difference between developmental robotics and epigenetic robotics, which are sometimes grouped under the term “ontogenetic robotics”, is subtle and concerns the type of environment. While the former refers only to the physical environment, the latter also takes the social environment into account.

The term “epigenetic” (beyond the genetic) was introduced into psychology by the Swiss psychologist Jean Piaget to describe his new field of study, which emphasizes the individual’s sensorimotor interaction with the physical environment rather than taking only genes into account. The Russian psychologist Lev Vygotsky later supplemented this idea with the importance of social interaction.

Robotics researchers at the University of California at Davis have developed a control system that allows robots to collect evidence suggesting that their leader is about to turn, predict where it will go and then follow it. “This is a fundamental problem in robotics,” says Sanjay Joshi, associate professor of mechanical and aeronautical engineering at UC Davis. Whether walking down the street, driving on the highway or in many other situations, people often pick up deliberate signals and unconscious cues in order to predict what others will do and act accordingly. Robots, however, find this kind of coordination more difficult, for example when the leader of a group turns a corner and disappears from the field of vision of its companions. Studies in behavioral psychology have shown that a person who is about to turn unconsciously gives a brief nod in the direction they are preparing to take, so that others can follow.

The robot exhibition held from October 21 to 23, 2004 in Santa Clara, in the heart of Silicon Valley, is always a good opportunity to take stock of a rapidly evolving sector. This exhibition, the largest of its kind, brings together the best in robotics at the city’s convention center. For three days, thousands of professionals and enthusiasts crowd the stands and conferences to identify trends and see the main new products.

Building snake-inspired robots has occupied a prominent place in robotics over the past decade, but until now it has been difficult to reproduce the reptile’s movements accurately. Researchers at SINTEF have managed to emulate this skill with a system composed of the robot Aiko and a virtual double of the snake, which allows experiments to be run on a computer. Unlike most of its predecessors, this snake does not need wheels to move with ease. Although adding wheels makes movement easier, by converting the twisting motion into a continuous glide, a wheeled snake moves best on smooth surfaces. The point, however, is to use this type of machine in disaster areas. “In a collapsed building where there are lots of rubble, for example after an earthquake, a wheeled snake would probably be stuck,” says Aksel Transeth, a researcher at SINTEF.

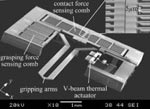

Robots at the nanoscale can already perform some basic manipulation, but it has remained difficult to control them well enough to perform more complex actions such as handling nanoelectronic components or cells. To overcome this problem, a team at the University of Toronto (Canada) has announced a pair of robotic grippers that can move independently within a microscopic environment without damaging the surrounding components. The principle is simple: these micro-robots are endowed with a sense of touch. “The robots are equipped with load cells that allow them to perceive their environment through touch,” Philippe Bidaud, director of the Institute for Intelligent Systems and Robotics (ISIR), told L’Atelier. “They can therefore understand data such as the weight of an object or the resistance of a membrane,” he says.

Jerome Damelincourt, head of the Robopolis store, has agreed to give us his vision of robotics, a world he has long been close to. At a time when Asimo serves coffee and talks to the person in front of it, one wonders in which direction robotics is heading.

L’Atelier: Hello, Jerome Damelincourt. People come from far away to visit Robopolis. How did you get the idea of opening a shop dedicated to robots?

Jerome Damelincourt: In 2000, I decided to create the website vieartificielle.com. Given its success and the public’s enthusiasm, I opened Robopolis in 2003. To mark the opening of the shop, I also created a dedicated website, robopolis.com. It was a dream that I never let go of.

The Flame robot was already able to walk in a way similar to a human being. A team at the University of the Basque Country now wants to give robots the ability to move independently and adapt to their environment. Its robot, Tartalo, has a navigation system that allows it to move freely in confined spaces such as apartments, even if it has not been programmed for that particular dwelling. It is also able to adapt to changes in the space. A camera positioned at eye level allows it to perceive its environment. Its on-board computer has been programmed to recognize four different kinds of area: bedroom, corridor, lobby and doorway. When placed in a new environment, it makes several passes in order to identify and memorize the location of each room.

RYOBOT is my Rug-Warrior-based robot. It stands about 8 inches high and is about 6 inches in diameter. It has a two-motor differential drive, a 360-degree bump skirt, and the full complement of sensors from the Rug Warrior design. The logic is powered by 4 AA cells, and the drive is powered by six rechargeable 2-volt D cells arranged in two batteries of three cells each.
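For readers unfamiliar with a two-motor differential drive, the sketch below shows the standard kinematic model: the robot’s forward speed is the average of the two wheel speeds, and its turning rate is their difference divided by the distance between the wheels. The wheel base of 0.15 m and the wheel speeds are assumed values for illustration, not measurements of RYOBOT, and the simple Euler update ignores slip and motor dynamics.

```python
import math


def diff_drive_step(x, y, theta, v_left, v_right, wheel_base, dt):
    """Advance the pose (x, y, heading) of a differential-drive robot by dt seconds.

    v_left and v_right are wheel ground speeds in m/s; wheel_base is the
    distance between the two drive wheels in metres.
    """
    v = (v_left + v_right) / 2.0              # forward speed of the robot centre
    omega = (v_right - v_left) / wheel_base   # turning rate in rad/s
    x += v * math.cos(theta) * dt
    y += v * math.sin(theta) * dt
    theta += omega * dt
    return x, y, theta


WHEEL_BASE = 0.15  # assumed value, roughly the 6-inch body diameter

# Equal wheel speeds drive straight ahead; opposite speeds spin in place.
straight = diff_drive_step(0.0, 0.0, 0.0, 0.1, 0.1, WHEEL_BASE, 1.0)
spin = diff_drive_step(0.0, 0.0, 0.0, -0.1, 0.1, WHEEL_BASE, 1.0)
```

Spinning in place is what lets a round robot with a bump skirt back away from an obstacle and turn without needing extra clearance.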

This robot is built on a 6-wheel motorized toy called the BOSS (Battery Operated Spin System), which was available at toy stores a few years ago for about $120. The unit included wheels, gear motors, batteries and a charger. The plastic body was discarded, a wooden base was attached, and multiple decks were made from sheets of aluminum. The power electronics, including the batteries and motor drivers, are on the bottom deck, the computer is on the second deck and the sensors are on the top deck. The computer is a standard PC/XT motherboard, which controls the two DC motors through the parallel printer port. The software resides on a 3.5″ floppy, which automatically boots the robot program, written in QuickBASIC 4.5. The software can read a joystick, record the motions and then play them back. This teach-and-repeat technique works well for short robot competitions where the task is well defined. The overall cost was about $450. Email questions to roger@robotics.com
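The teach-and-repeat idea can be sketched in a few lines: sample the joystick at a fixed period while the operator drives, store the samples, then replay them to the motors at the same period. This is an illustrative Python sketch, not the original QuickBASIC program; `read_joystick` and `set_motors` are hypothetical callables standing in for the joystick and parallel-port code, and the fixed sampling period is an assumption.

```python
import time
from typing import Callable, List, Tuple

MotorCommand = Tuple[float, float]  # (left, right) motor values, assumed range -1.0..1.0


def record(read_joystick: Callable[[], MotorCommand], n_samples: int,
           period_s: float, sleep=time.sleep) -> List[MotorCommand]:
    """Teach phase: sample the joystick n_samples times, one sample per period."""
    samples = []
    for _ in range(n_samples):
        samples.append(read_joystick())
        sleep(period_s)
    return samples


def play_back(samples: List[MotorCommand],
              set_motors: Callable[[float, float], None],
              period_s: float, sleep=time.sleep) -> None:
    """Repeat phase: send the recorded commands at the same rate, then stop."""
    for left, right in samples:
        set_motors(left, right)
        sleep(period_s)
    set_motors(0.0, 0.0)  # always leave the motors stopped
```

Because playback is open loop, any wheel slip or battery sag makes the repeated path drift from the taught one, which is consistent with the technique suiting short, well-defined tasks.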

Robot arm commanded by a microcomputer.

The processor is a single-chip microcontroller from Intel (80C196KB) running at 12 MHz, with 32 KB of RAM and 16 KB of ROM. I use an IBM PC compatible computer to send command sequences via a serial port (COM1 or COM2) to the 80C196KB. The 80C196KB transforms the command sequences into pulses that make the robot move.

The PC program is a simulator: you can run a simulation and see how the robot will move.

The programming language I used for both the 80C196KB and the PC was C.

I used plywood and aluminium to build the robot.

The robot is driven by four R/C servos (HITEC HS-300).
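The step in which commands become pulses can be illustrated with the usual R/C servo convention, where the commanded position is encoded in the width of a repeated pulse. The sketch below maps a joint angle linearly onto a pulse width; the 1000 to 2000 microsecond range and the 180 degree travel are assumed, commonly quoted values rather than HS-300 specifications, and the function and joint names are hypothetical. It is written in Python for illustration, whereas the original firmware was in C.

```python
JOINTS = ("base", "shoulder", "elbow", "gripper")


def angle_to_pulse_us(angle_deg: float, min_us: int = 1000, max_us: int = 2000,
                      max_angle: float = 180.0) -> int:
    """Map a joint angle onto a servo pulse width in microseconds (linear, assumed range)."""
    if not 0.0 <= angle_deg <= max_angle:
        raise ValueError(f"angle {angle_deg} outside 0..{max_angle} degrees")
    return round(min_us + (max_us - min_us) * angle_deg / max_angle)


def pose_to_pulses(pose: dict) -> dict:
    """Convert one commanded pose (angle per joint) into one pulse width per servo."""
    return {joint: angle_to_pulse_us(pose[joint]) for joint in JOINTS}


pulses = pose_to_pulses({"base": 90, "shoulder": 45, "elbow": 135, "gripper": 0})
# pulses == {"base": 1500, "shoulder": 1250, "elbow": 1750, "gripper": 1000}
```

On the real arm the limits for each servo would need to be taken from its datasheet or found by calibration, since driving a servo beyond its mechanical travel can damage it.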

Specifications:

- A robot arm commanded by a microcomputer.

- The microcomputer: 16 KB ROM, 32 KB RAM, 12 MHz.

- The microprocessor in the microcomputer is a single-chip processor made by Intel, the 80C196KB.

- A program on an IBM PC compatible simulates the robot arm's movement.

- The command sequences are then sent to the microcomputer via a COM port.

- The microcomputer translates the commands and makes the robot move.

- The robot is a four-axis robot with four R/C servos (HITEC HS-300): one for the base, one for the shoulder, one for the elbow and one for the gripper.

- The materials used to build the robot are plywood and aluminium.

Hardware required for the PC program:

- A PC based on an Intel 80386 or higher.

- SVGA graphics for the GUI.

- MS-DOS as the operating system.

- A communication port (COM1 or COM2) for sending data to and receiving data from the robot.
